Image courtesy of Adi Goldstein/Unsplash.

This article was originally published by the Elcano Royal Institute on 14 January 2021.

Theme

It had to happen eventually. Of all the countries in the world, the hacking back debate has finally entered the political discourse in neutral Switzerland. While it is still too early to determine where the discussion is heading, it is also the perfect time to insert a new perspective on hacking back.

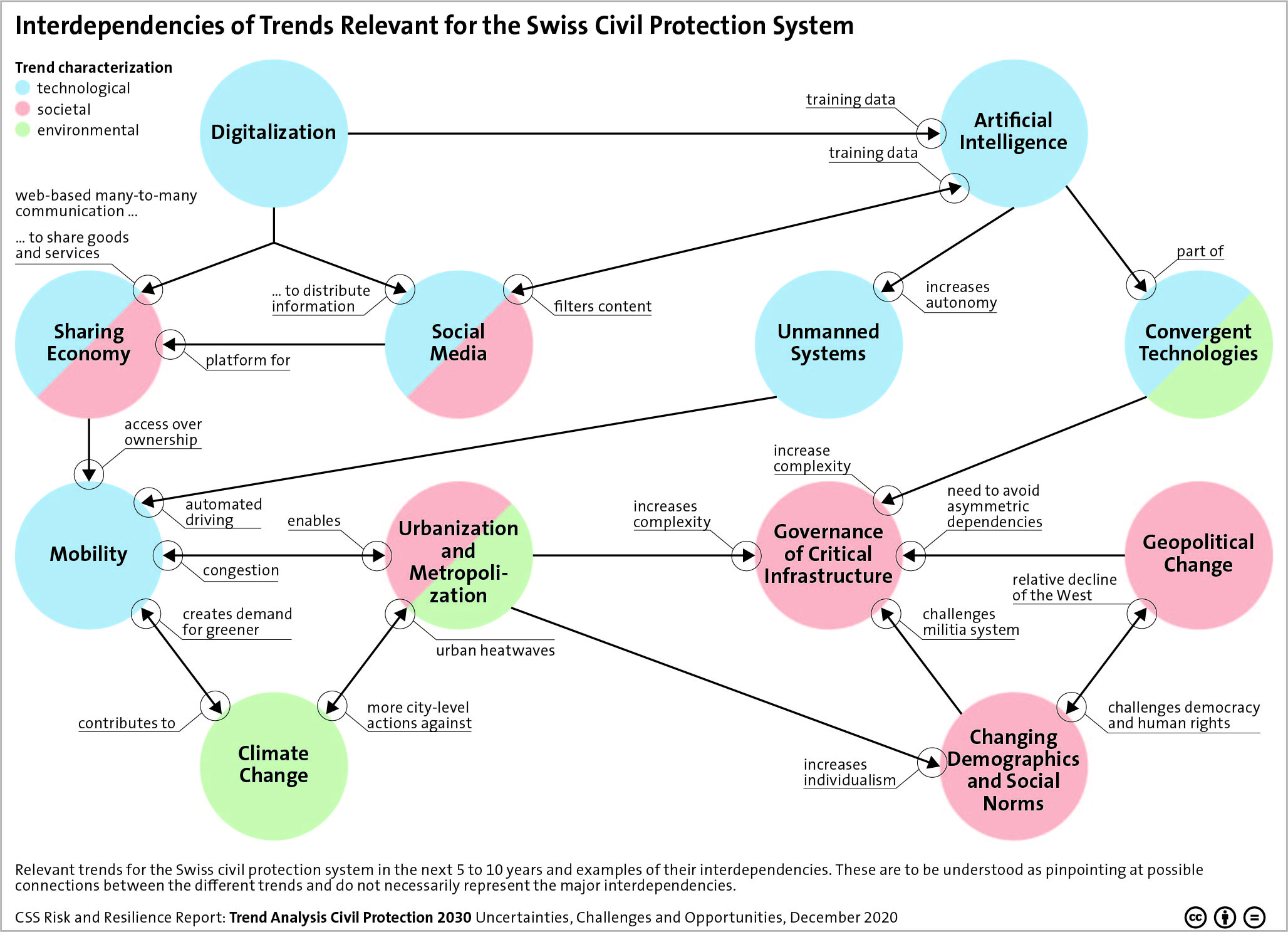

Artificial Intelligence, Digitalization, Climate Change – many overarching trends and developments have the potential to alter the lives of billions of people in the coming years. This graphic maps the relevant trends for the Swiss civil protection system in the next 5 to 10 years and outlines examples of their interdependencies.

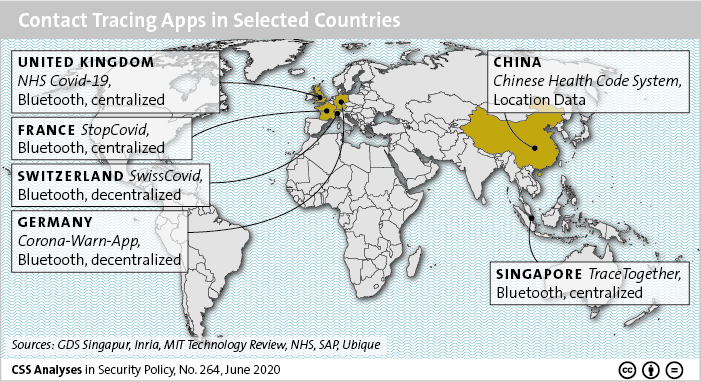

In the context of the global efforts to deal with the coronavirus pandemic, digital technologies are taking on a role that is both visible and controversial. This graphic maps Contact Tracing Apps in selected countries and offers details on some of their features, such as data storage and the technology used for device detection.