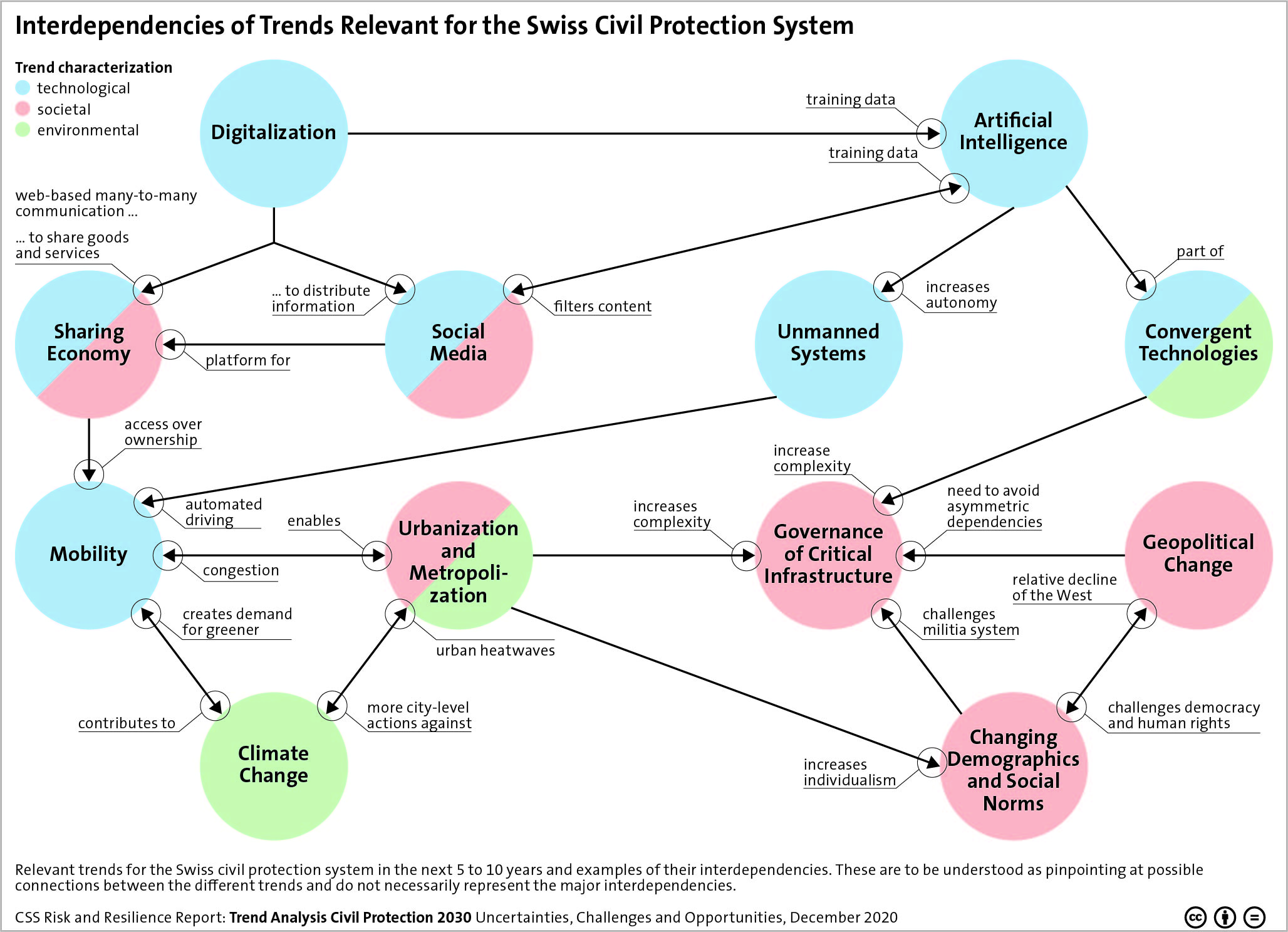

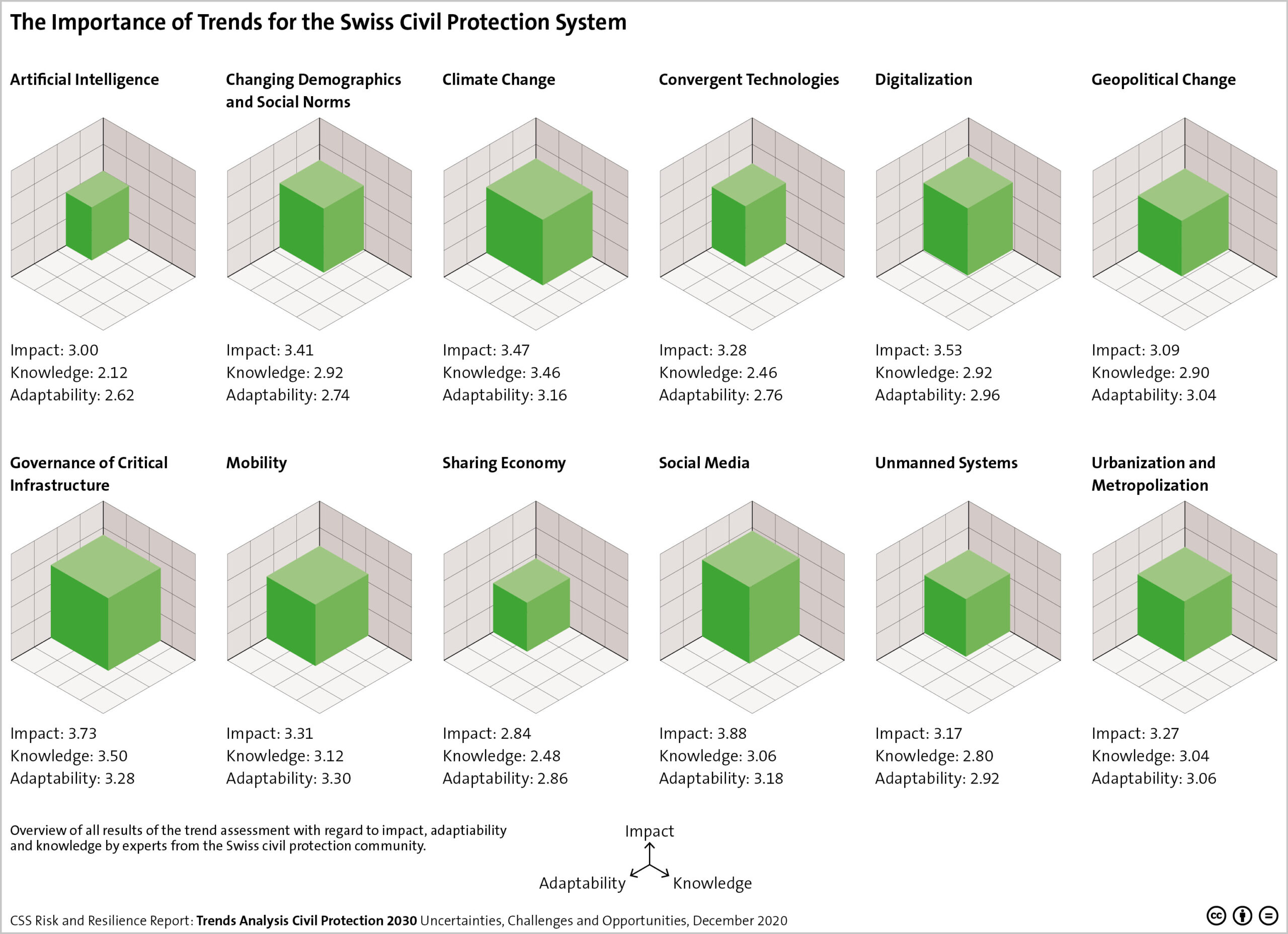

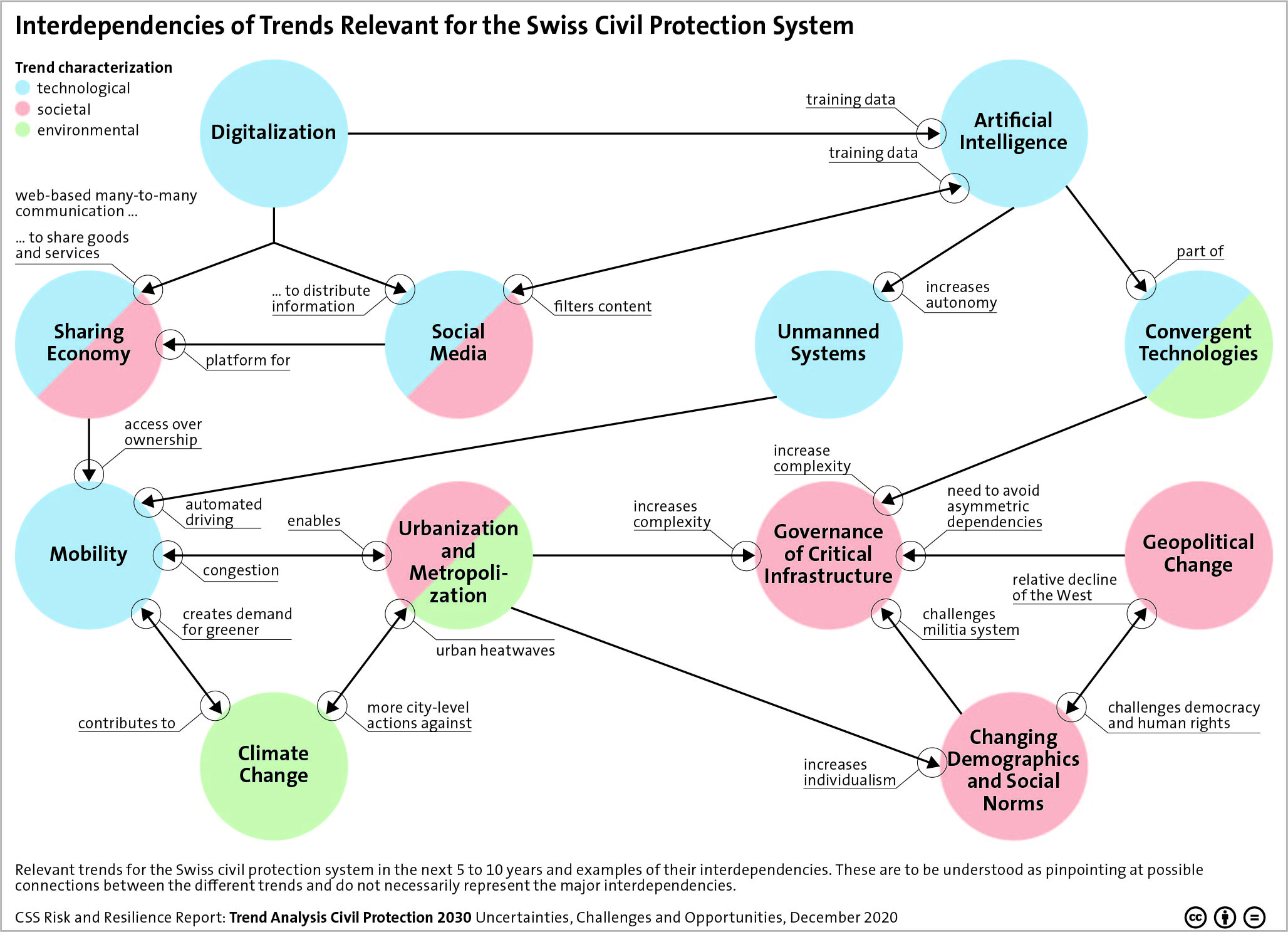

This week’s featured graphic highlights the interdependencies among trends relevant to the Swiss civil protection system. For more on the uncertainties, challenges and opportunities these trends create for civil protection, read the CSS Risk and Resilience Report by Andrin Hauri, Kevin Kohler, Florian Roth, Marco Käser, Tim Prior, and Benjamin Scharte.

Artificial intelligence, digitalization, climate change: many overarching trends and developments have the potential to alter the lives of billions of people in the coming years. This graphic maps the trends relevant to the Swiss civil protection system over the next 5 to 10 years and outlines examples of their interdependencies.